Automating Stem Separation with Google Colab and Demucs

Lately, as I dive back into music production, I found myself needing a fast, reliable way to source, separate and prepare audio samples. Instead of relying on expensive, subscription-based vocal removers, I decided to build my own.

I developed a minimal audio pipeline that takes a YouTube link (or raw MP3 files), extracts the highest quality audio and uses a neural network to split the track into isolated stems: drums, bass, vocals and instruments.

Everything runs on Google Colab, utilizing free cloud GPUs and connects directly to my Google Drive where the files land permanently. I even exposed the control panel through an ngrok tunnel, allowing me to queue up tracks directly from my phone. It operates strictly as a private, short-lived cloud tool that I spin up exactly when I need it.

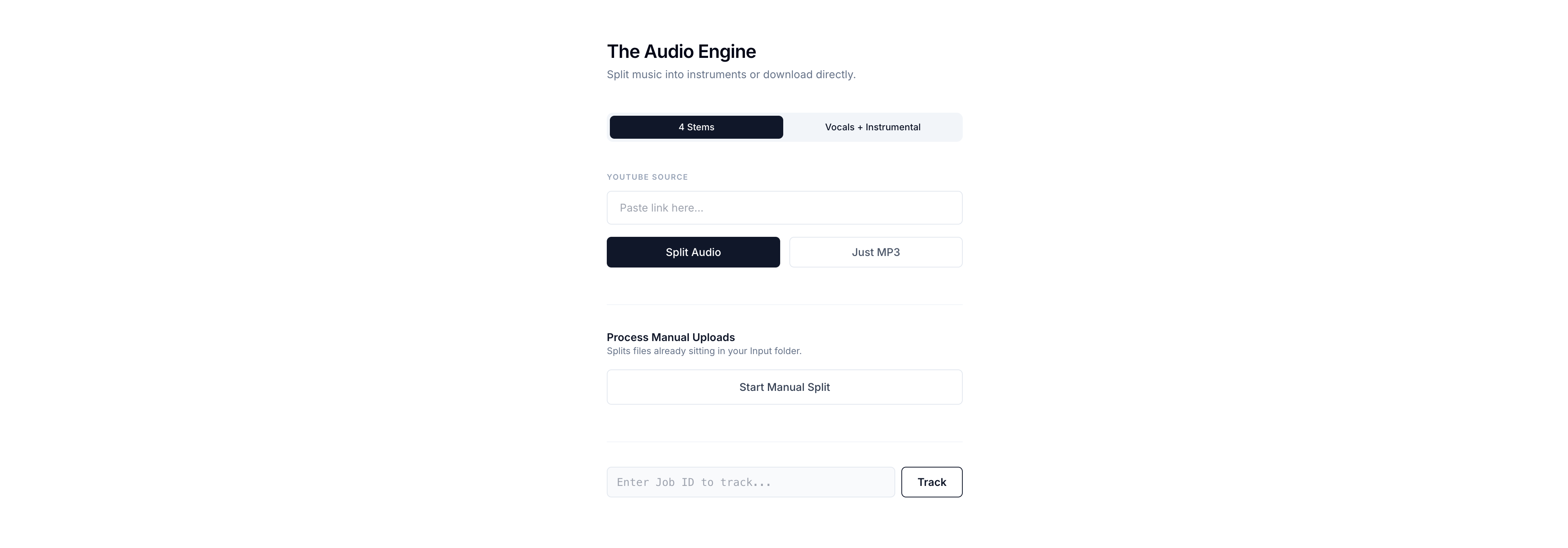

The Audio Engine

Tool

A minimal audio engine built on Google Colab that automates YouTube retrieval and neural stem separation directly to Google Drive.

The Setup: A Modular Cloud Architecture

The architecture of this pipeline is deliberately decoupled to maximize efficiency.

- The Control Surface: My smartphone.

- The Compute Engine: Google Colab (providing heavy GPU acceleration).

- The Storage Layer: Google Drive (for permanent, secure file hosting).

To make this run smoothly on Colab’s dynamic PyTorch environment, I bypass strict dependency constraints. I install yt-dlp for YouTube audio extraction and pull demucs directly from its GitHub repository to ensure the latest compatibility.

!pip install -q torch torchaudio torchvision

!pip install -q --no-deps git+https://github.com/adefossez/demucs

!pip install -q dora-search julius lameenc openunmix yt-dlp flask pyngrok

Managing Secrets Safely

Handling paths and API tokens entirely within the Colab environment prevents hardcoding sensitive data. The script dynamically pulls API keys and Drive paths from Colab's internal userdata vault.

from google.colab import userdata

from pathlib import Path

from dataclasses import dataclass, field

def get_secret_path(key: str) -> Path:

"""Retrieves a secret and converts to Path, failing with a clear error if missing."""

value = userdata.get(key)

if value is None:

raise ValueError(f"❌ Secret '{key}' is missing! Please add it in the Secrets tab and enable notebook access.")

return Path(value)

@dataclass

class DemucsConfig:

model: str = "htdemucs_ft"

mp3_bitrate: int = 320

raw_audio_input_path: Path = field(default_factory=lambda: get_secret_path('DEMUCS_IN_PATH'))

separated_stems_output_path: Path = field(default_factory=lambda: get_secret_path('DEMUCS_OUT_PATH'))

youtube_downloads_path: Path = field(default_factory=lambda: get_secret_path('YOUTUBE_OUT_PATH'))

cfg = DemucsConfig()

Retrieving Audio via YouTube and yt-dlp

The pipeline starts with the source material. By pasting a YouTube link into the custom web interface, the system uses yt-dlp to fetch the highest available audio stream. It immediately converts the stream into a crisp 320kbps MP3 directly inside a designated Google Drive folder.

import subprocess as sp

def download_youtube_audio(url: str, download_folder: Path):

download_folder.mkdir(parents=True, exist_ok=True)

filename_template = str(download_folder / "%(title)s.%(ext)s")

cmd = [

"yt-dlp", "--no-playlist", "-f", "bestaudio", "-x",

"--audio-format", "mp3", "--audio-quality", "0",

"-o", filename_template, url

]

sp.run(cmd, check=True)

Neural Stem Separation with Demucs

Once the audio lands safely in Google Drive, the system triggers the Demucs deep learning model. Specifically, I use the htdemucs_ft configuration, which deeply analyzes frequency patterns to physically isolate the track into precise stems.

I execute this as a subprocess, strictly enforcing GPU acceleration (-d cuda). This reduces processing time from several minutes down to mere seconds. Upfront, I can decide the separation mode:

- 4-Stem Split: Vocals, Drums, Bass and Other.

- 2-Stem Split: Vocals and Instrumental (perfect for quick instrumental beds).

def run_demucs_separation(input_dir: Path, output_dir: Path, mode: str = "4stems"):

audio_files = [f for f in input_dir.iterdir() if f.suffix.lower() in [".mp3", ".wav"]]

cmd = ["python3", "-m", "demucs.separate", "-o", str(output_dir), "-n", cfg.model, "-d", "cuda", "--mp3", f"--mp3-bitrate={cfg.mp3_bitrate}"]

if mode != "4stems":

cmd.append(f"--two-stems={mode}")

cmd.extend([str(f) for f in audio_files])

sp.run(cmd, check=True)

Background Threading for Mobile Access

Because AI stem separation is a heavy machine learning task, running it synchronously would instantly freeze the Flask control panel.

To solve this, I handle all separation jobs via background threading. When I submit a URL, the UI immediately returns a Job ID, while the Colab GPU silently processes the audio in the background. I expose this local Flask server to the internet using an ngrok tunnel. This grants me a public URL that I can open on my phone, allowing me to queue up audio jobs even while I am away from my desk.

from flask import Flask, request

from pyngrok import ngrok

import threading

import uuid

import shutil

app = Flask(__name__)

active_jobs = {}

@app.route("/process_url")

def process_url():

url = request.args.get("url")

mode = request.args.get("mode", "4stems")

job_id = str(uuid.uuid4())

active_jobs[job_id] = "queued"

def worker():

temp_folder = Path(f"tmp_{job_id}")

active_jobs[job_id] = "downloading"

download_youtube_audio(url, temp_folder)

active_jobs[job_id] = "separating"

run_demucs_separation(temp_folder, cfg.separated_stems_output_path, mode)

shutil.rmtree(temp_folder)

active_jobs[job_id] = "completed"

threading.Thread(target=worker).start()

return {"job_id": job_id}

if __name__ == "__main__":

ngrok.set_auth_token(userdata.get('NGROK_AUTH_TOKEN'))

tunnel = ngrok.connect(5000, domain=userdata.get('NGROK_DOMAIN'))

print(f"Public URL: {tunnel.public_url}")

app.run(port=5000)

Manual Control & Embracing the Cloud's Limitations

Not every sample comes from YouTube. If I drop arbitrary WAV or FLAC files directly into a designated Input folder in Google Drive, I can hit a separate /separate_manual endpoint on the mobile UI. The pipeline scans the directory and automatically processes the manually injected queue.

Good Enough for What I Need

Google Colab sessions shut down after inactivity. For most web apps, that is a problem. In this setup, it works in my favor.

I spin up a session, process the audio from my phone, then let it time out. It is not elegant, but it gets the job done. The outputs, including raw files and separated stems, stay stored in Google Drive, which is all I really need.

Running something like this on always-on infrastructure would mean paying for a server with a GPU sitting idle most of the time, which does not make much sense for occasional use. This approach avoids that completely.

It is a bit quick-and-dirty solution, but for my use case, it's enough. A lightweight pipeline that does the heavy work when needed, then disappears without costing anything while idle.